Let’s Encrypt is an awesome free, automated and open way of protecting your site with https. As you may have noticed, this site is using a Let’s Encrypt certificate and I’ve started rolling it out to all my other sites too. With a free https certificate there’s really no excuse not to go https only. In fact, if you want to take advantage of HTTP/2 you’ll need https, since no browser currently supports it unencrypted even though the spec doesn’t mandate encryption.

Even if your site doesn’t hold any sensitive information, if you ever update it or log in to it from an untrusted location such as a café, your login credentials might get disclosed to someone malicious. And if, like most of us, you use the same credentials in multiple places, that might be a really bad thing.

I didn’t come up with the steps I’m about to explain here; the credit goes to Bjørn Johansen, whose blog posts I’ll summarise. I’ll link the posts I used as my initial reference at the end of this post in case you need more details. Let’s Encrypt support for Nginx is still experimental and buggy, so you’ll need to use the manual method to install the certificate.

Setting up Let’s Encrypt client

We’ll use git to get the client, and bc is needed later, so on Ubuntu/Debian install both with apt.

apt-get install git bc

Now with git we’ll clone the Let’s Encrypt client repo.

git clone https://github.com/letsencrypt/letsencrypt /opt/letsencrypt

Preparing Nginx

To verify the domain, the Let’s Encrypt verification server will look for verification files created by the client in a subdirectory of your webroot: /.well-known/acme-challenge/

Since I have lots of sites under the same Nginx and I want them all to use https eventually I’ve created a configuration snippet under /etc/nginx/global named letsencrypt-challenge.conf with the following content:

# Allow access to the ACME Challenge for Let’s Encrypt
location ~ /\.well-known\/acme-challenge {
    allow all;
}

This is not required if you don’t block files starting with a dot.

The server section for the site could look something like this:

server {

listen 80;

server_name example.com;

root /var/www/example.com;

include global/letsencrypt-challenge.conf;

}

Once you’ve included global/letsencrypt-challenge.conf, don’t forget to reload Nginx.

service nginx reload
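Before requesting a certificate it can be worth checking that Nginx really serves files from the challenge directory. The following is only a minimal sketch: the function name make_probe and the file name probe.txt are made up for illustration, and the webroot is the one from the example server block.

```shell
#!/bin/sh
# Hypothetical helper: drop a probe file into the ACME challenge directory
# of the given webroot and print the URL path it should be served under.
make_probe() {
    webroot=$1
    dir="$webroot/.well-known/acme-challenge"
    mkdir -p "$dir" || return 1
    printf 'probe-ok\n' > "$dir/probe.txt" || return 1
    echo "/.well-known/acme-challenge/probe.txt"
}

# Usage (run on the server, then fetch the file over plain http):
#   path=$(make_probe /var/www/example.com)
#   curl -s "http://example.com$path"
#   rm "/var/www/example.com$path"
```

If curl returns the content of the probe file, the verification server should be able to reach the real challenge files too.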

Get the certificate from Let’s Encrypt

Now you are ready to use the Let’s Encrypt client to request a certificate for your domain.

/opt/letsencrypt/letsencrypt-auto certonly --agree-tos --webroot -w /var/www/example.com \
    -d example.com

If all goes well you’ll get four files under /etc/letsencrypt/live/example.com: privkey.pem, cert.pem, chain.pem and fullchain.pem. You’ll need those to set up SSL in Nginx, but before we do that let’s make sure the certificate is renewed automatically, because it is only valid for 90 days.

Setup auto renew for certificate

As I just mentioned, the certificates from Let’s Encrypt are only valid for 90 days, and I’m sure you don’t want to have to remember to renew them manually, so we’ll set up a cron job to do that for us. There’s already a nice script that does all the heavy lifting. We’ll just need to download it and make it executable for root.

curl https://gist.githubusercontent.com/bjornjohansen/aaf0d29f225ffd1ea222/raw/e1b4bec81d32dba86e2d4e9d70a2b9f4d6cca773/le-renew.sh > /opt/le-renew.sh

chown root:root /opt/le-renew.sh

chmod 0500 /opt/le-renew.sh

Please note that this script assumes you installed the Let’s Encrypt client in /opt/letsencrypt; if you didn’t, adjust the path in the script. It’s also a good idea to understand what the script does rather than blindly executing as root any script you’ve downloaded from the web.

The script tries to renew the certificate when the expiration date is less than 30 days away. We’ll set up cron to run the script once a week, so even if it fails for some reason there’s still plenty of time to get it right. Create a file /etc/cron.d/letsencrypt-renew with the following content:

32 5 * * 1 root /opt/le-renew.sh example.com /var/www/example.com > /dev/null 2>&1
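To make the "less than 30 days away" rule concrete, here is a small sketch of that kind of check. It is not the contents of le-renew.sh; the function names days_until_expiry and needs_renewal are made up for illustration, and the usage lines assume GNU date.

```shell
#!/bin/sh
# Whole days between two Unix timestamps (expiry first, then "now").
days_until_expiry() {
    echo $(( ($1 - $2) / 86400 ))
}

# Succeeds when fewer than THRESHOLD days remain (third argument,
# default 30 as described in the text).
needs_renewal() {
    [ "$(days_until_expiry "$1" "$2")" -lt "${3:-30}" ]
}

# Usage with a real certificate (assumes GNU date for the -d option):
#   end=$(openssl x509 -enddate -noout \
#         -in /etc/letsencrypt/live/example.com/cert.pem | cut -d= -f2)
#   if needs_renewal "$(date -d "$end" +%s)" "$(date +%s)"; then
#       echo "time to renew"
#   fi
```

With a weekly cron run and a 30 day threshold, a failed renewal gets several more attempts before the certificate actually expires.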

Setup https in Nginx

To serve https you’ll need a new server block that listens on port 443, and you’ll need to tell Nginx where to find the private key and certificate for this domain.

server {
    listen 443 ssl;
    server_name example.com;
    root /var/www/example.com;

    ssl_certificate /etc/letsencrypt/live/example.com/fullchain.pem;
    ssl_certificate_key /etc/letsencrypt/live/example.com/privkey.pem;

    include global/letsencrypt-challenge.conf;
}

That is the minimum required, but we are not going to stop there, as there are six more steps we can take to make it more secure and optimize its https performance.

1) Connection credential caching

Most of the https overhead is in the initial connection setup, so caching the connection parameters significantly speeds up subsequent requests. All you need are the following lines in your config:

ssl_session_cache shared:SSL:20m;
ssl_session_timeout 60m;

This creates a cache shared between all the worker processes. A 1 MB cache can store around 4000 sessions, so 20 MB should be plenty for most sites. You can make it smaller if you are concerned about memory, but Nginx should be smart enough not to consume all memory just for the cache.
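As a quick back-of-the-envelope check of the cache size, using the rough figure of 4000 sessions per megabyte (the function name cache_capacity is made up for this sketch):

```shell
#!/bin/sh
# Rough session capacity of an ssl_session_cache of the given size in MB,
# using the "around 4000 sessions per MB" figure quoted in the text.
cache_capacity() {
    echo $(( $1 * 4000 ))
}

cache_capacity 20   # prints 80000
```

So the 20m cache configured here has room for roughly 80,000 sessions.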

2) Disable SSL

This may seem counterintuitive, but https actually covers both SSL (Secure Sockets Layer) and TLS (Transport Layer Security). SSL has been superseded by TLS and shouldn’t be used because of its many weaknesses. Disabling SSL makes your site inaccessible to IE6, but do you really care about that?

The latest version of TLS at the time of writing is 1.2, but there are still clients that only support 1.0, so we should support it too. Just add the following line to your Nginx config:

ssl_protocols TLSv1 TLSv1.1 TLSv1.2;

3) Optimize cipher suites

Encryption is at the core of https. Some ciphers are more secure than others and some are not secure at all anymore, so we’ll want to tell the client our preferred order of cipher suites. All of the ciphers on this list use forward secrecy. With this list you’ll lose support for all IE versions on Windows XP, but again, do you really care?

ssl_prefer_server_ciphers on;
ssl_ciphers ECDH+AESGCM:ECDH+AES256:ECDH+AES128:DH+3DES:!ADH:!AECDH:!MD5;

4) Generate DH parameters

DH parameters affect the Diffie-Hellman key exchange, in which the client and server negotiate the key for the session. By default the parameters are only 1024 bits, while our Let’s Encrypt key is 2048 bits, so we need to make Nginx use 2048 bits for the DH key exchange as well; otherwise it’s not as secure as it could be. The only downside is that Java 6 doesn’t support anything over 1024 bits, but again, do you really care about that?

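Generate the DH parameters file with a 2048-bit prime. Note that the options go before the size; newer OpenSSL versions do not parse options placed after it.

openssl dhparam -out /etc/nginx/cert/dhparam.pem 2048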

Add the dhparam to your config file:

ssl_dhparam /etc/nginx/cert/dhparam.pem;
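If you want to double-check the size of the generated parameters, a sketch like the following can help. The helper name dh_bits is made up, and it assumes that the text output of openssl dhparam contains a line of the form "DH Parameters: (2048 bit)"; the exact prefix differs between OpenSSL versions.

```shell
#!/bin/sh
# Extract the bit size from "openssl dhparam -text" output read on stdin.
# Assumes a line such as "DH Parameters: (2048 bit)"; prints nothing
# if no such line is found.
dh_bits() {
    sed -n 's/.*DH Parameters: (\([0-9][0-9]*\) bit).*/\1/p' | head -n 1
}

# Usage:
#   openssl dhparam -in /etc/nginx/cert/dhparam.pem -text -noout | dh_bits
```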

5) Enable OCSP stapling

When a proper browser is presented with a certificate, it checks with the issuer whether that certificate has been revoked, and that adds extra overhead. This is where Online Certificate Status Protocol (OCSP) stapling comes to the rescue. The web server contacts the certificate authority’s OCSP server at regular intervals to get a signed response, which it then staples onto the handshake when the connection is set up. This is much more efficient than having the browser go out and do the check itself.

To make sure the response from the CA has not been tampered with, Nginx needs to check it against the CA root and intermediate certificates. The Let’s Encrypt client already provides us with all the required certificates, so all we need to do is configure stapling and ssl_trusted_certificate.

ssl_stapling on;
ssl_stapling_verify on;
resolver 8.8.8.8 8.8.4.4;
ssl_trusted_certificate /etc/letsencrypt/live/example.com/chain.pem;

The resolver is a must and you can use Google public DNS servers as I’ve used here or you can use your own.
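One way to see whether stapling actually works is to ask openssl for the certificate status during the handshake. This is a minimal sketch: the helper name has_stapled_ocsp is made up, and it simply looks for the "OCSP Response Status: successful" line that openssl s_client prints when a stapled response is present.

```shell
#!/bin/sh
# Reads "openssl s_client -status" output on stdin and succeeds if it
# contains a successful stapled OCSP response.
has_stapled_ocsp() {
    grep -q 'OCSP Response Status: successful'
}

# Usage:
#   if echo | openssl s_client -connect example.com:443 \
#           -servername example.com -status 2>/dev/null | has_stapled_ocsp
#   then echo "stapling works"
#   else echo "no stapled response"
#   fi
```

Nginx fetches the OCSP response lazily, so the first connection after a reload may not carry one; try again before concluding that something is wrong.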

6) Strict transport security

HTTP Strict Transport Security (HSTS) is a way to tell the browser that this domain should only be used over https. Even if you set up a redirect from http to https, any request that goes over http is insecure. HSTS is supported in all modern browsers and is really simple to enable: just add a Strict-Transport-Security header with a maximum age. For the specified amount of time the browser then doesn’t even try to access the site via http.

add_header Strict-Transport-Security "max-age=31536000" always;
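The max-age value is given in seconds. A tiny sketch for converting days to seconds, so you can pick your own period (the function name hsts_max_age is made up for illustration):

```shell
#!/bin/sh
# Convert a number of days into the seconds value used by max-age.
hsts_max_age() {
    echo $(( $1 * 24 * 60 * 60 ))
}

hsts_max_age 365   # prints 31536000, the one-year value used in the header

# To see the header a server actually sends:
#   curl -sI https://example.com | grep -i '^strict-transport-security'
```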

Putting it all together

That’s a lot of configuration so here is a complete example configuration:

server {
    listen 443 ssl;
    server_name example.com;
    root /var/www/example.com;

    ssl_certificate /etc/letsencrypt/live/example.com/fullchain.pem;
    ssl_certificate_key /etc/letsencrypt/live/example.com/privkey.pem;

    include global/letsencrypt-challenge.conf;

    ssl_session_cache shared:SSL:20m;
    ssl_session_timeout 60m;

    ssl_protocols TLSv1 TLSv1.1 TLSv1.2;

    ssl_prefer_server_ciphers on;
    ssl_ciphers ECDH+AESGCM:ECDH+AES256:ECDH+AES128:DH+3DES:!ADH:!AECDH:!MD5;

    ssl_dhparam /etc/nginx/cert/dhparam.pem;

    ssl_stapling on;
    ssl_stapling_verify on;
    resolver 8.8.8.8 8.8.4.4;
    ssl_trusted_certificate /etc/letsencrypt/live/example.com/chain.pem;

    add_header Strict-Transport-Security "max-age=31536000" always;
}

Optional steps

What you most likely want to do is redirect from http to https. That is done by replacing your old server block with the following:

server {

listen 80;

server_name example.com;

root /var/www/example.com;

return 301 https://$host$request_uri;

}

Since you have https set up, you might want to enable HTTP/2 if your Nginx is new enough. That is very simple: just add the word http2 after ssl in the listen directive, like this:

listen 443 ssl http2;

If you are running an older Nginx you can still enable SPDY. It has been superseded by HTTP/2, but it might still be useful until you can enable HTTP/2. SPDY is enabled similarly to HTTP/2:

listen 443 ssl spdy;

Test your configuration

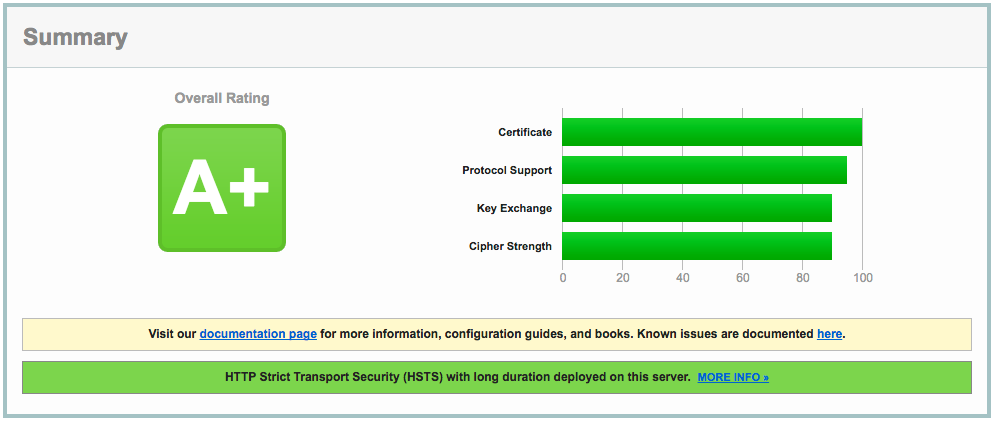

So how do you know you configured everything correctly? The site might be working in your browser, but that still doesn’t guarantee everything is correct. Qualys SSL Labs provides a nice scanner to test your setup. If you configured everything correctly you should get an A+ rating, just as shown above for this site.

References:

[1] Let’s Encrypt for Nginx

[2] Optimizing HTTPS on Nginx

Default admin user

Everyone knows the default admin user [email protected] and some attacks have taken advantage of knowing this user and even its user ID, which is predictable. I would suggest not just changing the email address and screen name of this user, but creating a completely new admin user and removing this one.

Portal instance web id

The default company web id is liferay.com and it goes without saying you should change it unless you are actually deploying liferay.com. You can do this simply by setting company.default.web.id property in your portal-ext.properties. This must be done before you start your portal and let it generate the database.

Encryption algorithm

By default Liferay is configured to use the 56-bit DES encryption algorithm. I believe this is a legacy of US encryption export laws. The problem with 56-bit DES is that it was cracked back in the 90s and is no longer considered secure. Liferay encrypts certain things with it, such as your password in the Remember Me cookie. If someone gets hold of that cookie they can crack your password. I would recommend using at least 128-bit AES. To do that you’ll just need to set the following properties before starting your portal against a clean database.

company.encryption.algorithm=AES

company.encryption.key.size=128

Password hashing

Recently a lot of sites have had their passwords compromised because they weren’t salting their password hashes. By default Liferay uses SHA-1 to hash your password. A hash is a one-way algorithm that doesn’t allow reversing the password from the hash, but if someone gets hold of your password hash it’s still possible to crack it with brute force or with rainbow tables. Rainbow tables are precalculated hashes that make finding unsalted passwords fast and easy. A salt is something we add to the password before hashing it, preferably unique to each password, so that even if two users have the same password their hashes are different. Liferay comes with the SSHA algorithm, which salts the password before calculating the SHA-1 hash from it. You can enable it by setting the following in your portal-ext.properties:

password.encryption.algorithm=SSHA
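Since these properties have to be in place before the first start, it can help to verify them with a small script. The following is only a sketch: the helper names prop_value and check_prop and the file path in the usage lines are made up, and the parser only handles simple key=value lines, not continuations or escapes.

```shell
#!/bin/sh
# Print the value of KEY from a Java properties FILE (last definition wins).
prop_value() {
    key=$(printf '%s' "$2" | sed 's/\./\\./g')   # make dots in the key literal
    sed -n "s/^[[:space:]]*${key}[[:space:]]*=[[:space:]]*//p" "$1" | tail -n 1
}

# Report whether KEY in FILE has the EXPECTED value; fails if it does not.
check_prop() {
    actual=$(prop_value "$1" "$2")
    if [ "$actual" = "$3" ]; then
        echo "ok   $2=$actual"
    else
        echo "FAIL $2=${actual:-<unset>} (expected $3)"
        return 1
    fi
}

# Usage (the path is hypothetical, adjust it to your installation):
#   f=/opt/liferay/portal-ext.properties
#   check_prop "$f" company.encryption.algorithm AES
#   check_prop "$f" company.encryption.key.size 128
#   check_prop "$f" password.encryption.algorithm SSHA
```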

Unused SSO hooks

The default Liferay bundle comes with all SSO hooks included. Even though they are not all enabled, it’s a good idea to remove any hooks you are not using. There’s a property called auto.login.hooks, and you should remove from it every hook you are not using. Also remember to disable their associated filters.

Unused Remote APIs

Liferay has several different remote APIs such as JSON, JSONWS, Web service, Atom, WebDAV, Sharepoint etc. You should go through them and disable everything your site is not using. Please note that some of Liferay’s built-in portlets rely on some of these APIs. All the APIs are accessible under the /api URL.

Mixed HTTP and HTTPS

Everyone should by now know about Firesheep, a Firefox extension that allows an attacker to sniff a wifi network they are connected to and hijack a user’s authenticated session. This attack can compromise any website that doesn’t send all authenticated traffic over https. If you use https for just part of the site and your users can access the rest of the site as an authenticated user over http, then you are vulnerable to a Firesheep attack. This is especially bad with Liferay if you are using the default encryption and the Remember Me functionality, because then the attacker could even recover your password and use it to log in to any system where you use the same password. I’m sad to say that even Liferay.com is vulnerable to this attack.

Shared Secrets

Don’t forget to change any shared secrets. The auth.token.shared.secret property has a default value that you want to change so that no one can try to exploit it. This tip came from Jelmer, who has found many vulnerabilities in Liferay. Another one you don’t want to overlook is auth.mac.shared.key, which has a default value of blank. That one is relevant if you have auth.mac.allow set to true.

This is not an exhaustive list, but it should make your Liferay installation much more secure than it is by default. For more tips on what to configure before going to production, check out the Liferay whitepapers. You should especially read the deployment checklist. If you can think of any other things that should be on this list, leave a comment or tweet them to me @koivimik

Update: Added shared secret tip from Jelmer